Product design: Facebook Parental Supervision

2023–2024 · Meta / Facebook

Designing supervision teens actually say yes to

A parent wants to keep their teen safe online. A teen wants to be trusted. These two needs pull in opposite directions — and both are valid.

With over 10 million active teens on Facebook and 700K new teen sign-ups daily, the pressure was real. Research showed 21% of guardians blocked Facebook entirely for their teens, not because the platform was inherently unsafe, but because they lacked any visibility into their teens' experience. Meanwhile, 65% said they would allow access if they had supervision tools.

The question was not whether to build supervision. It was: how do you design oversight that teens actually accept?

My role

I established the design principles that shaped the product, navigated the tension between parental control and teen autonomy, and iterated through multiple rounds of concept testing that directly informed what shipped — and what we deliberately chose not to build.

As Design Lead on Facebook's Integrity and Privacy team, I drove the creation and strategy for Parental Supervision from its earliest concepts through MVP launch. I organized and led a week-long cross-functional design sprint in Seattle (March 2023) with designers, product managers, and researchers from Privacy, Integrity, and the cross-Meta Family Center team.

Beginning in May 2024, this work evolved into Family Center, Meta's unified supervision hub across Facebook, Instagram, Messenger, and Meta Quest.

Where teens actually spend their time

Before we could design supervision, we needed to understand how teens actually use Facebook. The data painted a clear picture: Newsfeed, Videos, and Messenger together account for about 65% of teen time spent. Supervision tools therefore needed to address the full breadth of how teens engage, not just one surface.

Teen Audience — Surface Breakdown

Newsfeed: 91.96% of teen users visit, accounting for 18.89% of all time spent

Videos: 54.19% of teen users visit, taking the largest share of time at 27.88%

Messenger: 61.69% of teen users visit, accounting for 18.71% of time spent

Two families, two failures

During the sprint, we worked with four research-based personas that grounded every design decision in real human stories. Two stood out as defining for the project:

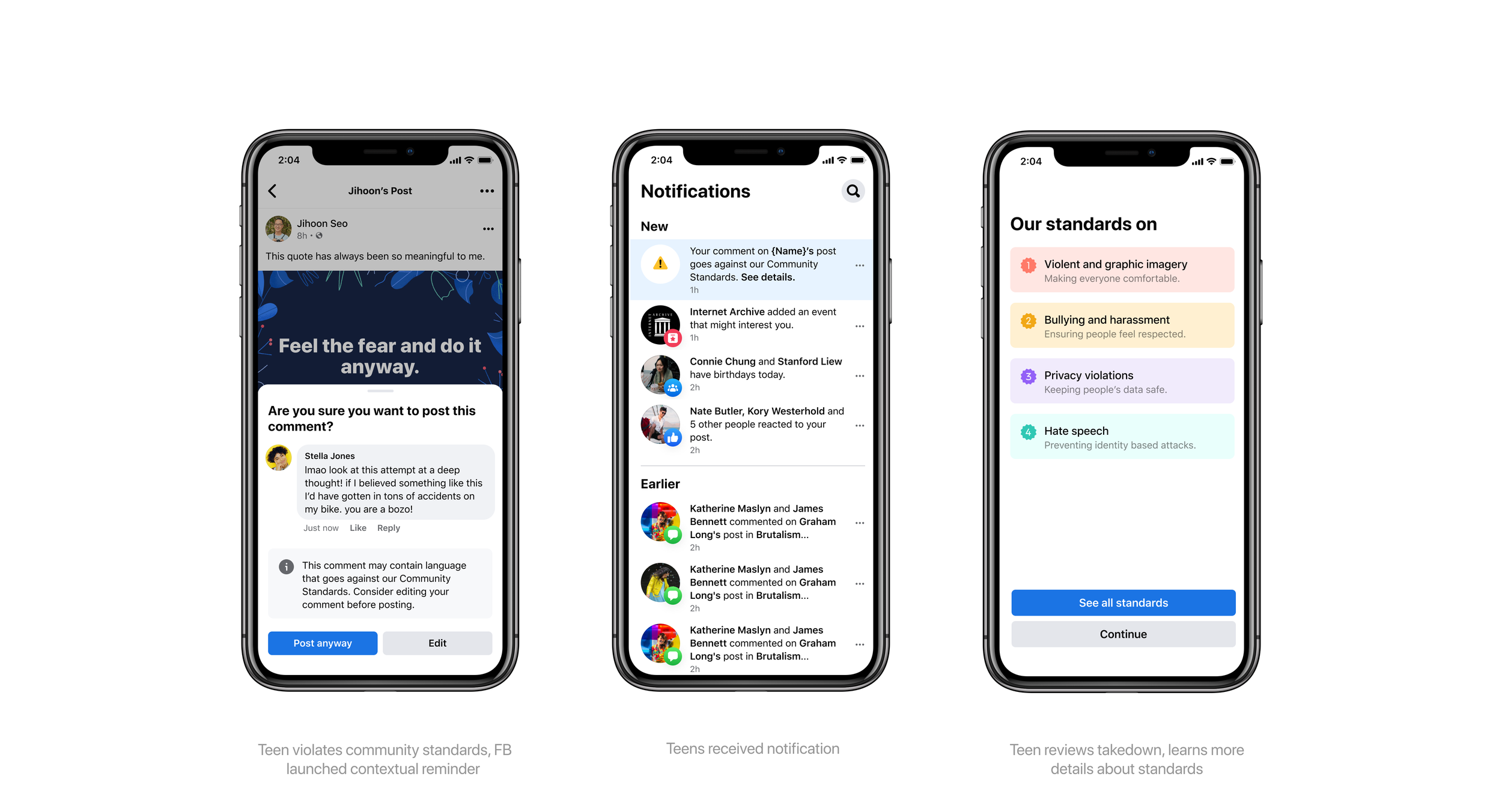

Jay — Family Troubles

Jay is 13, lives in an unstable housing situation, and loves gaming videos. He gets drawn in by Facebook's recommendation algorithm: borderline content leads to more borderline content. He joins a pile-on in the comments, bullying another user. His school finds out. His parents find out.

With no supervision tools to set boundaries earlier, Jay's parents resort to banning Facebook entirely. But Jay sneaks back on using a public computer, and his behavior escalates. The ban has created a family relationship around technology built on distrust rather than conversation, exactly the opposite of what the family needed.

Connor — Exploring Identity

Connor is a gay teen exploring identity and self-expression online. He's popular and outgoing with friends, but guarded at home: he hasn't come out to his parents. He joins LGBTQ+ groups on Facebook to find community and connect with people who've been in his exact situation.

When his parents notice his increased Facebook usage, they grow concerned. Without any supervision tools, they resort to the only option available: forcing Connor to show them his feed. This reveals his identity, something he wanted to share on his own terms. The result is a rift between Connor and his parents. Connor loses both his privacy and his community.

These stories crystallized the core insight: without proper supervision tools, families default to extremes, either zero visibility or total intrusion. Both fail. Connor's parents see everything and violate his privacy. Jay's parents see nothing and lose the ability to guide him. We needed to design the middle ground.

A system built on consent

Supervision is a two-sided, opt-in system. A parent invites their teen. The teen must accept. Either party can end supervision at any time.

This consent model was a deliberate choice: a tool teens reject protects no one. Once connected, parents gain access to insights and configurable controls through Family Center, and teens get full transparency into what their parents can view and do.
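To make the consent rules concrete, here is a minimal sketch of the supervision lifecycle as a small state machine. The state names (`none`, `invited`, `active`), the `transition` function, and the action shapes are hypothetical illustrations of the three rules above (a parent invites, the teen must accept, either party can end), not Meta's implementation.

```typescript
// Hypothetical states for a single parent-teen supervision link.
type SupervisionState = "none" | "invited" | "active";
type Role = "parent" | "teen";

type Action =
  | { kind: "invite"; by: Role }
  | { kind: "accept"; by: Role }
  | { kind: "decline"; by: Role }
  | { kind: "end"; by: Role };

// Returns the next state, or throws if the consent rules forbid the action.
function transition(state: SupervisionState, action: Action): SupervisionState {
  switch (action.kind) {
    case "invite":
      // Only a parent can start supervision, and only from a clean slate.
      if (state === "none" && action.by === "parent") return "invited";
      break;
    case "accept":
    case "decline":
      // Opt-in: only the teen can answer a pending invite.
      if (state === "invited" && action.by === "teen") {
        return action.kind === "accept" ? "active" : "none";
      }
      break;
    case "end":
      // Either party can end active supervision at any time.
      if (state === "active") return "none";
      break;
  }
  throw new Error(`${action.by} cannot ${action.kind} while state is "${state}"`);
}

// Usage: a parent invites, the teen accepts, then the teen ends supervision.
let s: SupervisionState = "none";
s = transition(s, { kind: "invite", by: "parent" }); // "invited"
s = transition(s, { kind: "accept", by: "teen" }); // "active"
s = transition(s, { kind: "end", by: "teen" }); // "none"
```

Rejecting every action outside the three rules keeps the consent guarantee in one place: in this sketch there is no path to `active` that skips the teen's acceptance.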

Supervision user flow

Four design challenges at the heart of teen supervision

01 Adapt to teens as they grow

Teens represent a diverse user group with varying privacy needs based on age and maturity, which evolve as they grow. As teens mature, parents' roles shift from setting strict limits to fostering trust and open communication. Designing for these changing dynamics is key to creating supervision tools that add value for both teens and guardians over time.

02 Provide opportunities for safe exploration

Social media helps teens explore their identities during a transitional phase, allowing them to express themselves in less vulnerable ways, such as through multiple accounts, disappearing content, or avatars. Guardians seek non-invasive tools for safe exploration, but overly intrusive methods lead to rejection. The challenge is balancing supervision with respect for teens' privacy and autonomy.

03 Support guardians and demystify social media

Fewer than half of guardians feel comfortable navigating basic tech tasks. Even those with technical knowledge struggle to grasp the nuances of their teens' social media experiences. Teens, in turn, wish their guardians better understood their concerns, and often hesitate to discuss social tech because they believe their guardians "won't understand."

04 Enhance parent-teen communication

Conversations about social media often become adversarial. Teens feel distrusted when oversight is too high, leading to tension and reluctance to share. While guardians are mindful of privacy, they feel that objective third-party insights from apps can help de-escalate these discussions and open healthier dialogue between families.

Sprint Concepts

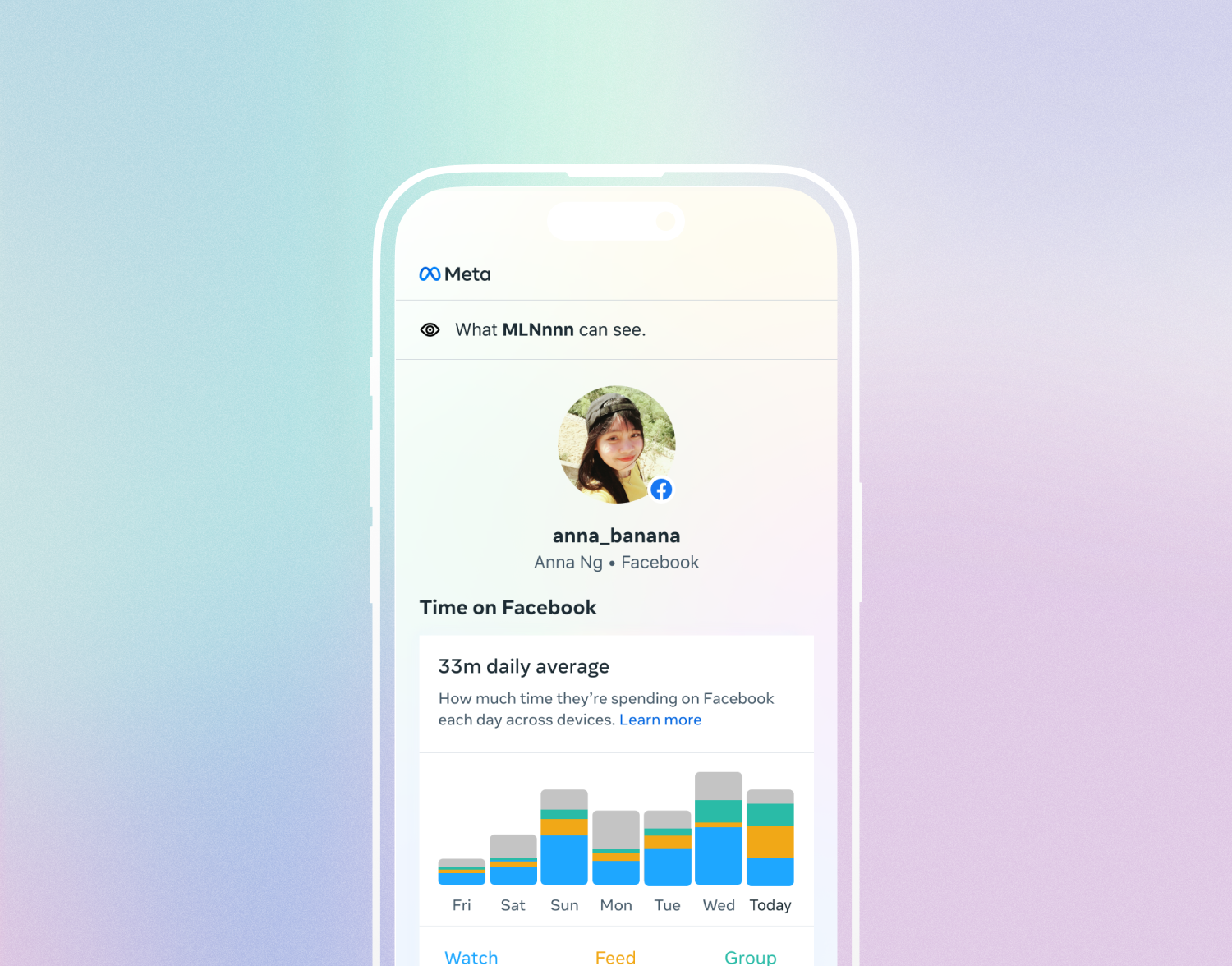

Time Spent / P0

Problem: Showing parents only general screen time, without details, is not very helpful for setting up time limits.

Opportunity area: Limits on time spent; data on where and how teens spend their time

Solution: To help parents make better time-management decisions with their teens, provide a detailed breakdown of screen time by teen activity, along with when Facebook is most used.

Connections / P0

Problem: Parents want visibility into the connections their teen is making on Facebook.

Opportunity area: Provide parents with supervision into the connections their teen is making on Facebook

Solution: Create a Connections section with visibility into the Friends, Groups, Pages, and Events their teen connects with.

Visibility into Security Settings / P0

Problem: Some teens may be new to the internet and not know best practices around account safety.

Opportunity area: Provide parents with insight into the strength of their teen's account security measures

Solution: Show password strength and two-factor authentication (2FA) status, and notify the parent when an unrecognized device signs in.

Visibility into Privacy Settings / P0

Problem: Some of the entities a teen connects with on Facebook signal identity and interests, which the teen may want to keep more private.

Opportunity area: Provide a way for teens and parents to balance privacy with supervision

Solution: In the teen onboarding flow, let teens choose which entities they share with their parents, with Friends selected by default.

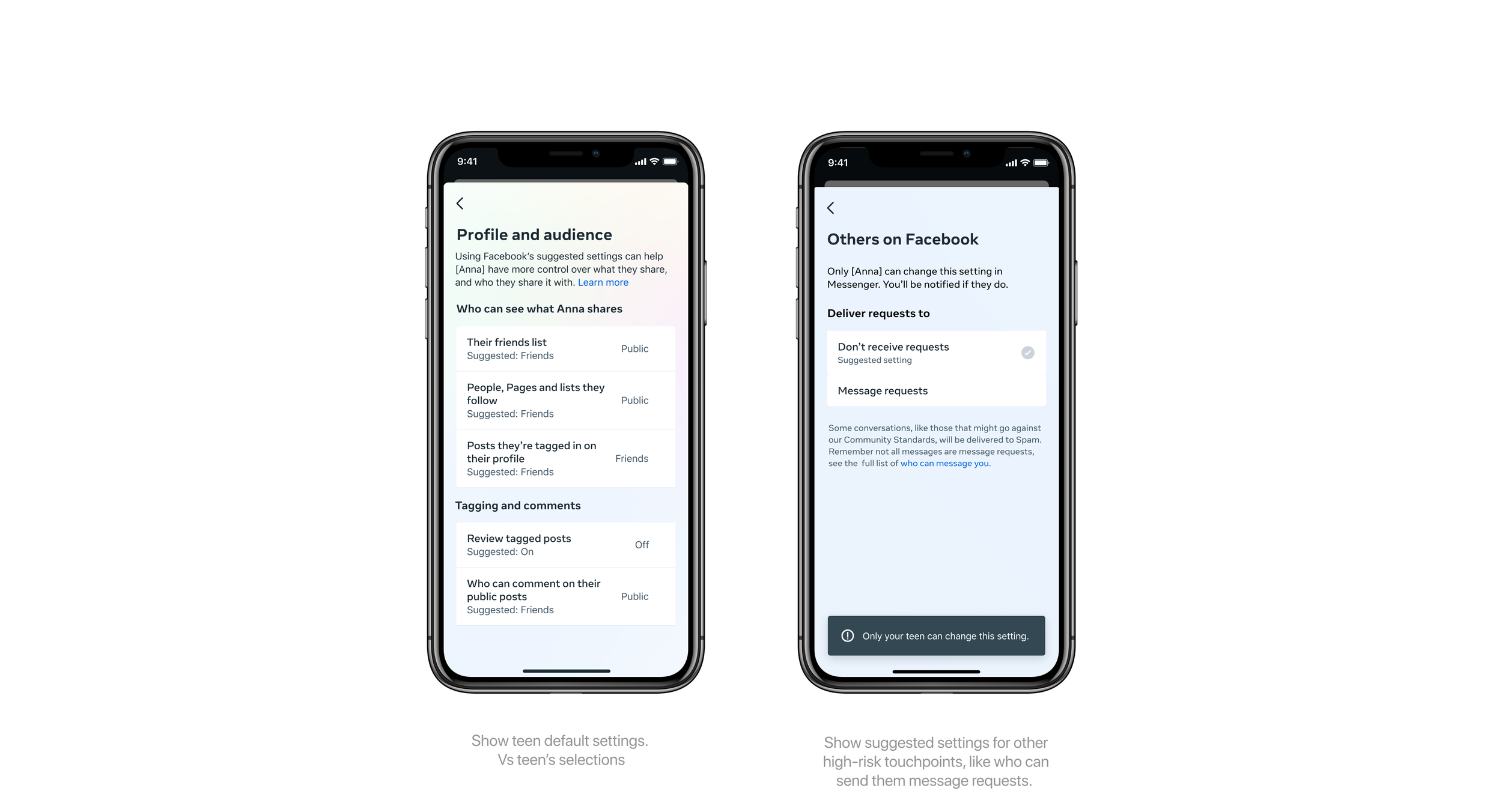

Settings recommendations / P0

Problem: Parents and teens may be unaware of our default privacy settings for teens, which minimize unwanted interactions and content exposure. Parents may also want visibility into the settings their teen has chosen, which is consistent with other supervision experiences.

Opportunity area: Raise awareness of our suggested settings to parents and highlight opportunities for teens to use safer settings.

Solution: Show default privacy settings in Family Center, alongside the teen’s selection for each setting.

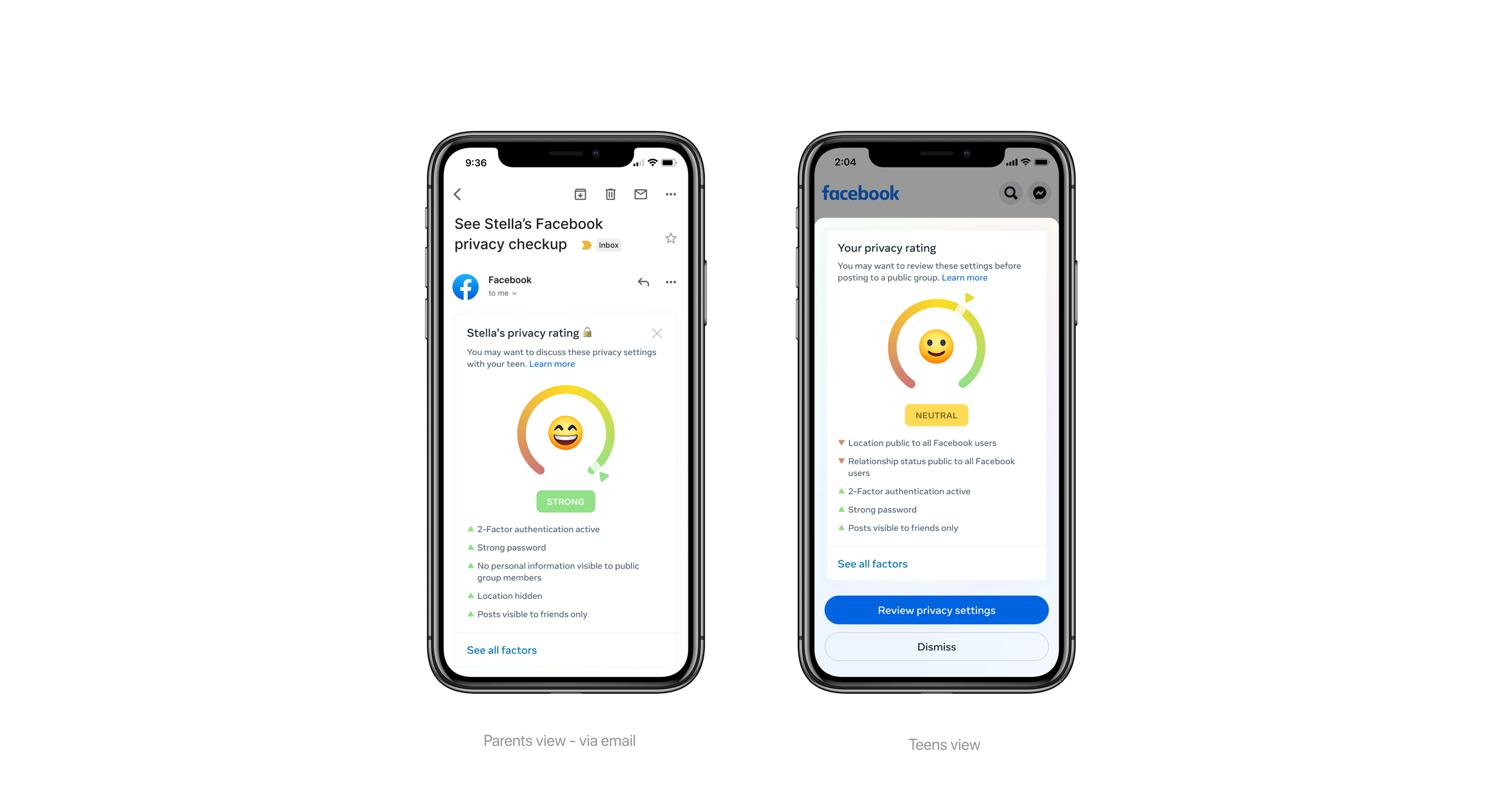

Privacy Rating / P1

Problem: Privacy settings can feel overwhelming to people who haven't visited them before. They may not know what's normal or what they should work toward.

Opportunity area: Privacy Checkup Expansion

Solution: Give explicit guidance on which settings may be disclosing too much, and nudge parents and teens to review them at pivotal ‘first time’ moments in their Facebook experience.

Teen post insights / P1

Problem: Teens may face unwanted content exposure.

Opportunity area: User insights

Solution: Give teens insights on their posts and remind them of their privacy settings, helping them avoid further harm while giving parents transparency.

Trigger Community Standards Education / P2

Problem: Our community standards are often tucked away and not understood before engaging in activities on Facebook.

Opportunity area: Trigger education

Solution: Bring community standards education into the product experience in a way that prompts teen reflection and discussion between families.

Content Recommendation Reset / P2

Problem: People are often drawn, intentionally or unintentionally, into rabbit holes or recommendation patterns that can be harmful over time.

Opportunity area: Sensitive content control

Solution: Show when this behavior might be forming, and provide corrective measures such as nudges, ‘New to you’ content diversification, and resources for evaluating content.

MVP Overview

Parents Experience: Focus on Family Center

Family Center is the hub of the parent-side supervision experience: one place where parents can gather enough information about their teen's activity. Providing the right level of detail is key. The goal is to promote conversation and help families find solutions together.

Teens Experience: Contextual & in the moment

For teens, supervision works best when it is contextual: it appears in the moment, within the Facebook experience, which makes it more frequent and more engaging.